Attention Is All You Need: Understanding the Transformer's Attention Mechanism

#3 Generative AI with LLMs: Understanding the Transformer Architecture - II

Introduction

The Transformer architecture has revolutionized natural language processing across applications such as machine translation, summarization and chatbots. This is primarily due to the power of the Transformer's attention mechanism. In this blog, let’s understand how the attention mechanism works and how it played a crucial role in the Transformer’s success.

Components of the Transformer

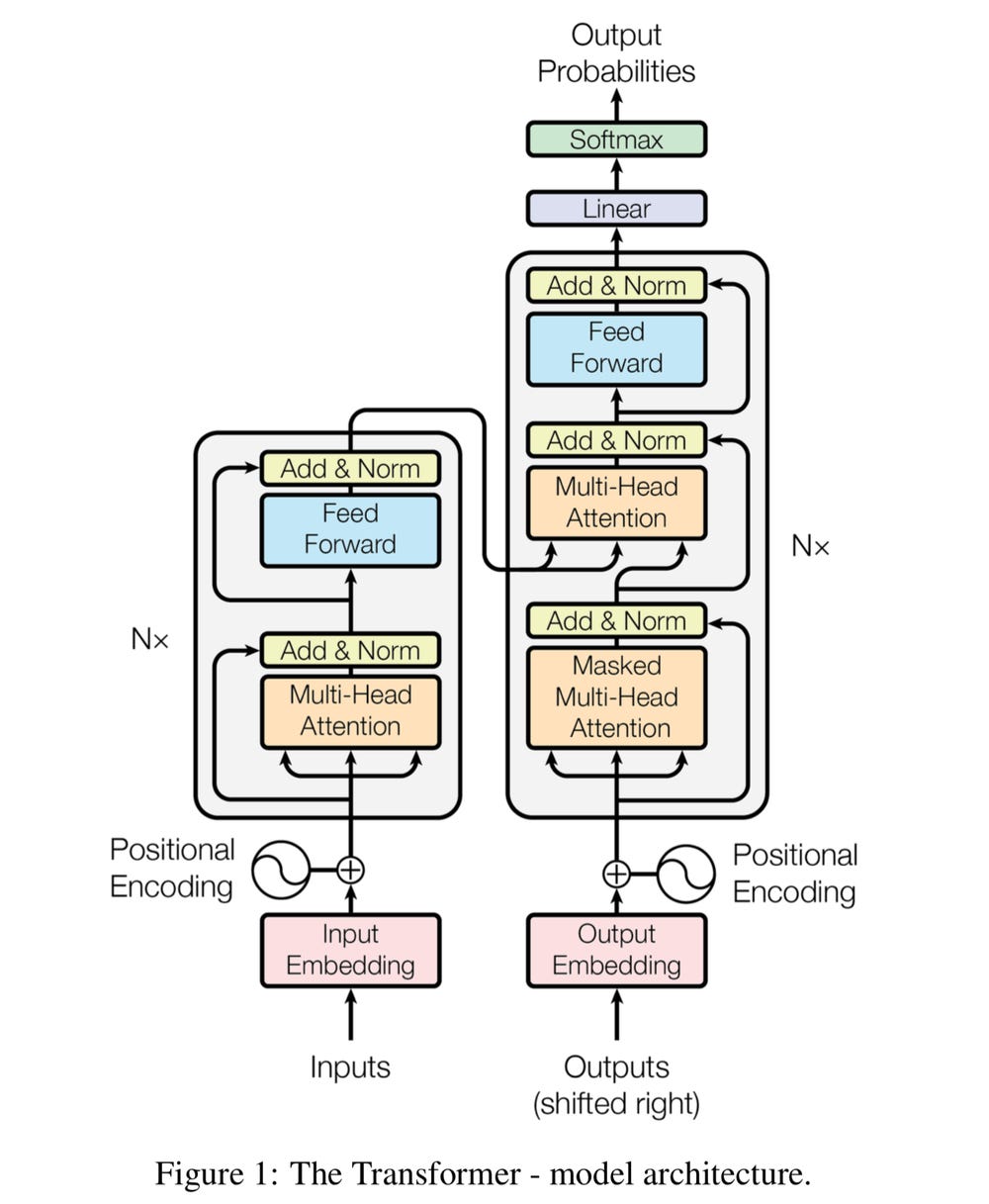

The transformer architecture comprises two main parts: the Encoder and the Decoder. In the figure above, the Encoder and Decoder blocks are connected to perform sequence-to-sequence tasks like machine translation. Later research showed that these blocks can also be used as stand-alone models for classification or text generation tasks.

The transformer model architecture comprises the following components:

Model Input/Output - Tokenization

Embeddings

Positional Encoding

Multi-Headed Attention for the Encoder and Masked Multi-Headed Attention for the Decoder

Layer Addition and Normalization blocks

Feedforward neural networks

Linear and Softmax layers

Let’s have a brief overview of each of the components of the transformer architecture.

Tokenization

Models don't accept raw text as input. The raw input is first broken down into smaller units called tokens, using word-based or sub-word tokenization techniques. The vocabulary is the list of all unique tokens the tokenizer knows; it is built when the tokenizer is created, usually from a large training corpus.

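# Example of tokenization

# Assumption: the Hugging Face transformers library with the "bert-base-uncased" checkpoint; the original does not name the tokenizer

from transformers import AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("bert-base-uncased")

input_text = "Tokenization converts text into tokens"

print(tokenizer.tokenize(input_text))

Out: ['token', '##ization', 'converts', 'text', 'into', 'token', '##s']

We can see in the above example that the sentence is broken down into sub-words. The tokenizer used above is a sub-word tokenizer, which splits words that are not in its vocabulary into smaller pieces. The `##` prefix marks a piece that continues the preceding word.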

Once the vocabulary is fixed, every token in it is assigned a unique number (ID). The input tokens are replaced by their IDs, and this encoded output is fed as input to the model.

# Encode the text to get list of numbers

input_text = "Tokenization converts text into tokens"

print(tokenizer.encode(input_text))

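Out: [101, 19204, 3989, 19884, 3793, 2046, 19204, 2015, 102]

The input text is now broken down into tokens and converted into numbers, ready to be fed into the model. The output has two more IDs than there are tokens because the tokenizer adds a special token at the start (101) and at the end (102) of the sequence. This end-to-end process is called Tokenization.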
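To make the token-to-ID mapping concrete, here is a minimal sketch with a toy word-level vocabulary. The helper names (`build_vocab`, `encode`) and the `[UNK]` placeholder for unseen words are illustrative assumptions, not part of any library; real sub-word tokenizers are considerably more involved.

```python
def build_vocab(corpus):
    """Assign a unique integer ID to every distinct token in the corpus."""
    vocab = {"[UNK]": 0}  # hypothetical placeholder for unseen tokens
    for sentence in corpus:
        for token in sentence.lower().split():
            if token not in vocab:
                vocab[token] = len(vocab)
    return vocab


def encode(text, vocab):
    """Map each whitespace-separated token to its ID (0 if unknown)."""
    return [vocab.get(token, vocab["[UNK]"]) for token in text.lower().split()]


corpus = ["tokenization converts text into tokens"]
vocab = build_vocab(corpus)
ids = encode("text into tokens", vocab)
unknown = encode("text into numbers", vocab)
print(ids)
print(unknown)
```

The word "numbers" never appeared in the toy corpus, so it falls back to the `[UNK]` ID. Sub-word tokenizers reduce how often this happens by splitting rare words into known pieces.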

Since the Encoder block has bi-directional attention, the entire input is fed into the model. The Decoder block, however, has causal (autoregressive) attention, i.e. only the tokens before the target word are considered.

Token Embedding Layer

Next, these tokens are mapped into a high-dimensional vector space. The resulting vectors are called embeddings: dense vector representations of every word or token. They capture the meaning of words and the relationships between them, which are learnt during model training.

The transformer model converts the token IDs to embeddings through a lookup in a learned embedding table. This ensures that the knowledge about each input token is captured.
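The lookup can be sketched as a table with one row per token ID. This is a toy illustration under stated assumptions: the sizes (`vocab_size`, `d_model`) are made up, and the random values stand in for weights that a real model would learn during training.

```python
import random

random.seed(0)  # fixed seed so the sketch is reproducible

vocab_size = 6   # toy vocabulary size (assumption)
d_model = 4      # toy embedding dimension (assumption)

# One row per token ID; in a real model these values are learned in training.
embedding_table = [
    [random.uniform(-1.0, 1.0) for _ in range(d_model)]
    for _ in range(vocab_size)
]


def embed(token_ids):
    """Look up the embedding vector for each token ID."""
    return [embedding_table[i] for i in token_ids]


token_ids = [3, 4, 5, 3]
vectors = embed(token_ids)
print(len(vectors), len(vectors[0]))  # sequence length, embedding dimension
```

Note that the first and last tokens share the same ID and therefore get exactly the same vector, which is why position information has to be added separately.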

Positional Encoding Layer

Token embeddings are just vector representations of the input words. They don’t capture the order of words in the input sentence. Therefore, positional embeddings are added to the word embeddings. In this way, the model can preserve the word order information.

Positional embeddings are vector representations of the index at which each word occurs in the input sequence. When they are added to the word embeddings, the model can learn the order in which the words occur in the sequence.
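One common way to build these position vectors is the fixed sinusoidal scheme from the original Transformer paper; other models learn the position vectors instead. The sketch below assumes the sinusoidal variant, and the function names are illustrative.

```python
import math


def positional_encoding(seq_len, d_model):
    """Sinusoidal encodings: even dimensions use sine, odd dimensions cosine."""
    pe = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            # Dimensions 2k and 2k+1 share the frequency 1 / 10000^(2k / d_model).
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        pe.append(row)
    return pe


def add_positions(token_embeddings):
    """Add the position vector to each token embedding, element by element."""
    seq_len, d_model = len(token_embeddings), len(token_embeddings[0])
    pe = positional_encoding(seq_len, d_model)
    return [
        [x + p for x, p in zip(tok, pos)]
        for tok, pos in zip(token_embeddings, pe)
    ]


# The same toy embedding at two positions ends up with two different vectors.
same_token_twice = [[0.5, 0.5, 0.5, 0.5], [0.5, 0.5, 0.5, 0.5]]
encoded = add_positions(same_token_twice)
print(encoded[0])
print(encoded[1])
```

Because the two rows differ after the addition, the model can tell the first occurrence of a token from the second.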

Understanding Self Attention

The token embeddings (word embeddings), combined with the positional embeddings, are passed to the attention layers of the Encoder/Decoder.

The attention layer computes self-attention weights for every word of the input to identify the relationships between the words. These weights form an attention map. The parameters that produce it are learnt during training, and the map defines the context and dependence of every word with respect to the other words in the input.

Here is an example visualization of the self-attention mechanism in transformers, created using the BertViz library.

In the above example, the highlighted words on the left indicate the amount of attention given to the corresponding word on the right. The model can thus resolve the reference of the word "it" in the input sequence and map it to the word "monkey".

This ability to attend to words and their relationships with other words helps the model capture context and handle long-range dependencies.
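The attention map described above can be sketched with scaled dot-product attention, the formulation used in the original Transformer paper. This is a simplified illustration: in a real model the queries, keys and values come from learned linear projections of the embeddings, whereas here the same toy vectors are used for all three.

```python
import math


def softmax(scores):
    """Turn a list of scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Return (outputs, attention_map) for one attention head."""
    d_k = len(keys[0])
    attention_map, outputs = [], []
    for q in queries:
        # Similarity of this query with every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        attention_map.append(weights)
        # Weighted average of the value vectors.
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs, attention_map


# Three toy token vectors; queries, keys and values are the same vectors here.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, attention_map = self_attention(x, x, x)
for row in attention_map:
    print([round(w, 3) for w in row])
```

Each row of the attention map sums to 1 and tells us how much one token attends to every token in the sequence, which is the quantity a tool like BertViz visualizes.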

Multi-Headed Attention & Masked Multi-Headed Attention

In the above example, we explored how attention is used to identify the relationship between a pronoun (i.e. it) and the subject (i.e. monkey) in the same sentence. A single unit of attention that learns a relationship like this pronoun-subject one is called an attention head.

In the transformer architecture, there are usually multiple attention heads (e.g., 12-100 heads) that work in parallel to learn different aspects and patterns of the language. Each head can specialize in a different pattern learned during model training. This is known as Multi-Headed Self Attention.

The Encoder block of the transformer consists of the Multi-Headed Self-attention after the positional encoding layer to learn different features, aspects, patterns of the input sequence, and various dependencies of words within the text. Since the Encoder considers the entire input sequence, i.e. both the left and right side context of a word in the input sequence, to extract features, this type of attention is called Bi-Directional attention.
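Here is a minimal sketch of multi-headed self-attention with plain Python lists. It assumes the usual layout in which each head gets its own query, key and value projections and the head outputs are concatenated and mixed by an output projection. The random matrices are stand-ins for learned weights, and all function names are illustrative.

```python
import math
import random

random.seed(0)


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(q, k, v):
    """Scaled dot-product attention for a single head."""
    d_k = len(k[0])
    scores = matmul(q, [list(col) for col in zip(*k)])  # q @ k^T
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v)


def random_matrix(rows, cols):
    """Stand-in for a learned weight matrix."""
    return [[random.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def multi_head_attention(x, num_heads):
    d_model = len(x[0])
    d_head = d_model // num_heads
    head_outputs = []
    for _ in range(num_heads):
        # Each head has its own projections, so it can learn a different pattern.
        w_q, w_k, w_v = (random_matrix(d_model, d_head) for _ in range(3))
        head_outputs.append(attention(matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)))
    # Concatenate the heads along the feature dimension, then mix them.
    concat = [sum((head[t] for head in head_outputs), []) for t in range(len(x))]
    w_o = random_matrix(d_model, d_model)
    return matmul(concat, w_o)


x = [[random.uniform(-1.0, 1.0) for _ in range(8)] for _ in range(3)]  # 3 tokens, d_model = 8
out = multi_head_attention(x, num_heads=2)
print(len(out), len(out[0]))
```

The output has the same shape as the input (one vector of size `d_model` per token), so the layer can be stacked or followed by the residual connection without any reshaping.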

Since the Decoder block mainly performs autoregressive tasks like text generation, i.e. next-word prediction by considering the past input tokens, the entire context of the input must not be considered for training, and the right side context of the word must be hidden from the attention layers.

Therefore, the right side context of each token is masked in the Multi-Head self-attention layers: an attention mask sets the scores of all future positions to negative infinity before the softmax, so they receive zero attention weight. This prevents information about the future from leaking into the past. This type of attention is called Autoregressive Attention, and the Masked Multi-Headed Self Attention layers are used in the Decoder of the transformer model.
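A small sketch of how masking hides the right side context, assuming the common approach of setting the scores of future positions to negative infinity before the softmax. The toy scores are all equal so the effect of the mask is easy to see; the helper names are illustrative.

```python
import math


def causal_mask(seq_len):
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]


def masked_softmax(scores, allowed):
    """Softmax over one row of scores, ignoring positions that are not allowed."""
    masked = [s if ok else -math.inf for s, ok in zip(scores, allowed)]
    peak = max(masked)
    exps = [math.exp(s - peak) for s in masked]  # exp(-inf) is 0.0
    total = sum(exps)
    return [e / total for e in exps]


# Toy raw attention scores for a 4-token sequence (all equal for clarity).
scores = [[1.0] * 4 for _ in range(4)]
mask = causal_mask(4)
weights = [masked_softmax(row, allowed) for row, allowed in zip(scores, mask)]
for row in weights:
    print([round(w, 2) for w in row])
```

Row i spreads its weight evenly over positions 0 to i and puts zero weight on every later position, so a token never sees the tokens that come after it.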

Layer Addition and Normalization Layers

The Layer Addition, or residual connection, adds the input of the attention layer to its output before passing the result on to the feedforward network. This ensures that information from earlier layers is not lost and helps address the vanishing gradients problem.

The Normalization layers are introduced to reduce unnecessary variations in the hidden vectors. This is done by rescaling each hidden vector to zero mean and unit standard deviation. This also helps speed up the training process significantly.
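The add-and-normalize step can be sketched for a single token vector as follows. This is a simplified illustration: real layer normalization also has a learnable scale and shift, which are omitted here, and the input values are made up.

```python
import math


def layer_norm(vector, eps=1e-5):
    """Normalize one hidden vector to zero mean and unit variance."""
    mean = sum(vector) / len(vector)
    variance = sum((v - mean) ** 2 for v in vector) / len(vector)
    return [(v - mean) / math.sqrt(variance + eps) for v in vector]


def add_and_norm(sublayer_input, sublayer_output):
    """Residual connection followed by layer normalization."""
    residual = [a + b for a, b in zip(sublayer_input, sublayer_output)]
    return layer_norm(residual)


x = [1.0, 2.0, 3.0, 4.0]                  # input to the attention layer
attention_output = [0.5, -0.5, 1.0, 0.0]  # toy output of the attention layer
y = add_and_norm(x, attention_output)
print([round(v, 3) for v in y])
```

The small `eps` term only guards against division by zero when all values in a vector are identical.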

Feed Forward Neural Networks

After passing through the Residual and Normalization layers, the attention layer’s outputs are passed to the fully connected feedforward layers for further processing of each token’s vector. These layers introduce additional non-linearity into the model architecture, which helps it identify hidden patterns in the input data.

Residual and Normalization operations are again applied to the outputs of the Feedforward layers before passing them through the linear and the softmax layers.
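A minimal sketch of the feedforward block for one token vector, assuming the two-layer design with a ReLU in between that the original Transformer uses. The weights below are arbitrary toy values, not learned ones, and the function names are illustrative.

```python
def relu(vector):
    return [max(0.0, v) for v in vector]


def linear(vector, weights, bias):
    """Compute vector @ weights + bias, with weights stored as rows."""
    return [
        sum(v * w for v, w in zip(vector, column)) + b
        for column, b in zip(zip(*weights), bias)
    ]


def feed_forward(vector, w1, b1, w2, b2):
    """Expand to a wider hidden layer, apply ReLU, project back."""
    return linear(relu(linear(vector, w1, b1)), w2, b2)


# Toy weights: d_model = 2, hidden size = 3 (values are arbitrary).
w1 = [[1.0, -1.0, 0.5], [0.0, 2.0, -1.0]]
b1 = [0.0, 0.0, 0.0]
w2 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
b2 = [0.1, -0.1]

token_vector = [1.0, 2.0]
out = feed_forward(token_vector, w1, b1, w2, b2)
print(out)
```

The same weights are applied to every token position independently, so this block does not mix information between tokens; that is the job of the attention layers.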

Linear layers with Softmax activation

Finally, the outputs are passed through the linear layer to obtain a logit for every token in the vocabulary. These logits are then passed through the softmax activation to obtain probabilities, and the token with the highest probability is chosen as the final prediction.
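The last step can be sketched as follows. The vocabulary and logit values are made up for illustration; in a real model the logits come out of the final linear layer, with one value per vocabulary entry.

```python
import math


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Toy vocabulary and logits for the next token (values are made up).
vocab = ["the", "monkey", "ate", "banana"]
logits = [1.2, 0.3, 2.5, -0.7]

probs = softmax(logits)
predicted = vocab[max(range(len(probs)), key=probs.__getitem__)]
print(predicted)
```

Always picking the most probable token is known as greedy decoding. Text generation systems often sample from the probabilities instead to get more varied output.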

Encoder-Only Model Architecture

The architecture of the Encoder-only model includes:

Tokenization of entire inputs

Positional Encoding layer = Token embeddings + positional embeddings

Bi-directional multi-headed attention layer

Addition and Normalization layer

Feed Forward Neural network

Linear and Softmax layers

This model type is best suited for extracting features and patterns from the input text and performing tasks like keyword extraction, named entity recognition and sentiment analysis. Examples of Encoder-only models are BERT, RoBERTa, etc.

Decoder-Only Model Architecture

The architecture of the Decoder-only model includes:

Tokenization of the right-shifted inputs

Positional Encoding layer = Token embeddings + positional embeddings

Autoregressive (masked) multi-headed attention layer

Addition and Normalization layer

Feed Forward Neural network

Linear and Softmax layers

The Decoder-only model type is best suited for generating text, i.e. the next-word prediction task. Examples of Decoder-only models are GPT-2, LLaMA and Falcon.

Encoder-Decoder based Model Architecture

The Encoder-Decoder based model includes both the Encoder and the Decoder layers described above. In addition, the outputs of the Encoder are fed into the second multi-headed attention block of the Decoder, along with the outputs of the Decoder's first (masked) multi-headed attention block, as shown in the architecture figure at the top of this post.

Encoder-Decoder model architecture best suits sequence-to-sequence tasks like answering questions and machine translation. Examples of encoder-decoder models are T5 and BART.
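The connection between the Encoder and the Decoder's second attention block is often called cross-attention. In the sketch below, the queries come from the Decoder while the keys and values come from the Encoder outputs. This is a simplified illustration with made-up vectors, and the learned projections of a real model are omitted.

```python
import math


def attend(queries, keys, values):
    """Scaled dot-product attention; returns one output vector per query."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in keys]
        peak = max(scores)
        exps = [math.exp(s - peak) for s in scores]
        weights = [e / sum(exps) for e in exps]
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs


encoder_outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # 3 source tokens
decoder_states = [[0.5, 0.5], [1.0, -1.0]]              # 2 target tokens so far

# Queries come from the Decoder; keys and values come from the Encoder.
context = attend(decoder_states, encoder_outputs, encoder_outputs)
print(len(context), len(context[0]))
```

The result has one context vector per target token, each a weighted mix of the source token vectors, which is how the Decoder consults the input sentence while generating the output.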

Summary

We have had a brief overview of the architecture and building blocks of the transformer model. To summarise:

Transformer architecture consists of two blocks: the Encoder and the Decoder blocks.

Converting the raw input text into tokens is known as tokenization.

Tokens are mapped into a high-dimensional vector space; the resulting vectors, called embeddings, better represent the inputs and the relationships between the tokens.

Token embeddings are passed through the positional encoding layer to encode the positional information with token embeddings.

Multi-headed attention computes attention weights to learn different aspects of the language and the dependence of every word on every other word.

Multi-headed attention is present in the Encoder, whereas masked multi-headed attention is used in the Decoder.

Residual connections and layer normalization are performed to retain important information and speed up the training.

Output logits and probabilities are computed by passing through the linear layers and the softmax activation. Then, the final token is chosen based on the highest probability score.

Thanks for reading!